AI Market Research Tool: How to Turn a Messy Market Into a Decision

An AI market research tool is useful when it compares competitors, customer pain, pricing, and market signals in one report you can actually challenge.

An AI market research tool is not valuable because it writes faster. It is valuable because it helps you separate signal from narrative before you make a product, pricing, or positioning decision. Most teams do not have a market research problem. They have a synthesis problem. Customer complaints live in Reddit threads and reviews. Pricing clues live on competitor pages and old snapshots. Market demand shows up in job posts, filings, launch pages, and earnings calls. By the time you collect all of it, you have 40 tabs and no decision.

That is exactly why this category is heating up. On Hacker News today, much of the discussion orbited the same tension from different angles: trust in tooling, privacy fallout, and whether modern AI workflows are making people more confident than correct. That is the right frame for market research too. A polished answer is not the same as a defensible market view.

Quick verdict: The best AI market research tool does not just summarize a category. It pulls evidence from reviews, pricing pages, public discussions, and filings, then keeps the contradictions visible enough that a team can actually challenge the conclusion before betting budget on it.

Fast path: Use this page if you need to pressure-test category entry, pricing, or positioning quickly. Jump to the 2-minute buyer scan, the real workflow, or the output shape.

| If your decision is... | Your first source stack should be... | What usually goes wrong |
|---|---|---|
| Entering a category | Reviews, forums, pricing pages, hiring signals, launch pages | Teams confuse hype with reachable demand |
| Repositioning against competitors | Competitor messaging, comparison pages, reviews, Reddit, win-loss notes | Teams copy the category story instead of finding the dissatisfaction gap |
| Defending a pricing or GTM bet | Pricing pages, packaging, customer complaints, public proof, earnings or investor narratives | Teams mistake polished packaging for willingness to pay |

The workflow implication: an AI market research tool has to triangulate independent sources early, because buyers do not trust a polished homepage story on its own.

The buyer-behavior figures behind that point come from a 2026 Corporate Visions roundup summarizing recent G2 and 6sense buyer-behavior research. They reinforce the core market-research problem: a usable tool cannot write one smooth story from one source type.

AI market research tool: what to check in the first 2 minutes

The best AI market research tool does not remove ambiguity. It makes the ambiguity legible enough to challenge before you commit budget.

| If the tool shows... | That usually means... | Decision value |
|---|---|---|
| Reviews, Reddit, pricing pages, and filings in one report | It can triangulate beyond vendor-controlled narratives | Better for category-entry and positioning bets |
| Confidence labels or source counts on claims | The output can be challenged instead of trusted blindly | Better for deck-ready recommendations |
| Contradictions called out explicitly | The workflow is preserving mixed signals instead of smoothing them away | Better for avoiding false-positive market conviction |
| Only one polished narrative with no source tension | You are getting synthesis theater, not decision support | Weak for high-stakes strategy work |

That quick scan matters because most teams are not buying "AI" in the abstract. They are buying fewer bad bets. If the artifact does not make disagreement visible, the tool is already hiding the thing you most need to see.

What an AI market research tool should actually do

A real AI market research tool should help you answer four decision questions:

- Is the market real enough to matter? You need demand signals, not category slogans.

- What are buyers actually struggling with? Review sites, community threads, and churn complaints matter more than polished homepage copy.

- How are competitors packaging the solution? Pricing, feature bundles, and sales language reveal who they think the buyer is.

- Where is the contradiction? If investor decks say momentum is strong but customers keep reporting implementation pain, that tension is the insight.

Most tools stop after question three. They summarize the visible landscape. Good market research goes one step further and forces the contradictions into view. That is where strategy comes from.

Why most market research AI outputs are too smooth

The failure mode is not usually missing information. It is false coherence.

A one-model workflow tends to compress everything into one calm narrative. The report sounds finished, so teams stop interrogating it. But market research is rarely clean. Buyer urgency can be real while willingness to switch is weak. A category can be growing while margins collapse. A competitor can have strong awareness and weak retention at the same time.

If your AI market research tool cannot preserve those mixed signals, it encourages the exact mistake teams make before a bad launch: overcommitting to a story that the evidence only half supports.

The real failure mode: a smooth market-research report can feel more decision-ready than it actually is. Good research artifacts do not just sound coherent. They show you where the case is strong, where it is weak, and where a human still needs to push.

How to use an AI market research tool for real decisions

The best workflow starts with a decision, not a generic prompt.

Bad prompt: Research the market for AI note-taking tools.

Better prompt: Evaluate whether AI note-taking is still attractive for a new product in 2026. Compare buyer pain, switching triggers, pricing patterns, competitor crowding, and signs of defensibility. Separate strong evidence from weak signals.

That instruction forces the system to gather decision-grade evidence instead of writing a category explainer.
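The decision-first pattern can be made repeatable with a small template. The sketch below is illustrative only: `build_research_prompt` and its parameters are hypothetical names, not part of any tool's API, and the wording simply reproduces the better prompt above from a decision plus a list of evidence dimensions.

```python
def build_research_prompt(decision: str, dimensions: list[str]) -> str:
    """Turn a decision and its evidence dimensions into a research prompt.

    Hypothetical helper for illustration; the phrasing mirrors the
    "better prompt" in the article.
    """
    decision = decision.strip()
    if not decision:
        raise ValueError("start from a decision, not an empty topic")
    if not dimensions:
        raise ValueError("name at least one evidence dimension to compare")

    # Join the dimensions as "a, b, and c" so the prompt reads naturally.
    if len(dimensions) == 1:
        compared = dimensions[0]
    elif len(dimensions) == 2:
        compared = " and ".join(dimensions)
    else:
        compared = ", ".join(dimensions[:-1]) + ", and " + dimensions[-1]

    return (
        f"Evaluate whether {decision}. "
        f"Compare {compared}. "
        "Separate strong evidence from weak signals."
    )


prompt = build_research_prompt(
    "AI note-taking is still attractive for a new product in 2026",
    [
        "buyer pain",
        "switching triggers",
        "pricing patterns",
        "competitor crowding",
        "signs of defensibility",
    ],
)
print(prompt)
```

The function refuses an empty decision on purpose: a topic with no decision attached is the generic prompt the article warns against.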

A practical market research sequence looks like this:

- Customer pain: Pull repeated complaints from reviews, forums, and social discussion. This reveals what buyers hate enough to switch for.

- Competitor packaging: Compare pricing, bundles, promises, and positioning. This shows where the market is crowded or undersold.

- Market validity: Pull public growth, funding, hiring, and partnership signals. This distinguishes a real market from a hype pocket.

- Contradictions: Flag where buyer sentiment and company messaging diverge. This is usually where the strategic insight lives.

The sequence matters. Customer pain and contradiction checks usually carry more strategic weight than polished category copy because they reveal what the market says privately versus publicly.

A good AI market research tool pulls each source type for a different reason. If everything comes from one narrative surface, you are not doing market research yet.

That is where an AI market research tool earns its keep. It should not just gather sources. It should return a map of where the opportunity is credible, where it is thin, and what would still require manual calls or interviews.
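The contradictions step in the sequence above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular tool works: the `Signal` record, the `VENDOR_CONTROLLED` grouping, and the sample data are all hypothetical. The idea is to compare what vendor-controlled sources say about a theme with what independent sources say, and flag the themes where the two disagree.

```python
from collections import defaultdict
from dataclasses import dataclass

# Assumption: these source types are the ones a vendor controls.
VENDOR_CONTROLLED = {"homepage", "pricing_page", "investor_deck"}


@dataclass(frozen=True)
class Signal:
    theme: str        # e.g. "implementation effort"
    source_type: str  # e.g. "review", "reddit", "homepage"
    positive: bool    # True if the source paints the theme favorably


def find_contradictions(signals: list[Signal]) -> list[str]:
    """Return themes where vendor-controlled and independent sources disagree."""
    vendor = defaultdict(set)
    independent = defaultdict(set)
    for s in signals:
        bucket = vendor if s.source_type in VENDOR_CONTROLLED else independent
        bucket[s.theme].add(s.positive)

    flagged = []
    for theme, vendor_stances in vendor.items():
        # Flag only themes that both sides address with different stances.
        if theme in independent and vendor_stances != independent[theme]:
            flagged.append(theme)
    return sorted(flagged)


# Made-up sample signals, for illustration only.
signals = [
    Signal("implementation effort", "investor_deck", True),
    Signal("implementation effort", "review", False),
    Signal("pricing clarity", "pricing_page", True),
    Signal("pricing clarity", "reddit", True),
    Signal("support quality", "review", False),
]
contradictions = find_contradictions(signals)
print(contradictions)  # ['implementation effort']
```

Themes that only one side mentions, such as "support quality" here, are not flagged as contradictions. They are better treated as open questions for interviews.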

What the output should look like

If the output is just a wall of prose, you are still in note-taking mode.

A useful market-research report should include:

- Executive takeaway: what the market rewards, what it punishes, and what remains uncertain

- Buyer pain themes: repeated complaints and unmet needs

- Competitor comparison: positioning, pricing, proof, and obvious gaps

- Confidence labels: high-confidence findings versus weaker interpretation

- Open questions: what still needs customer interviews or firsthand validation

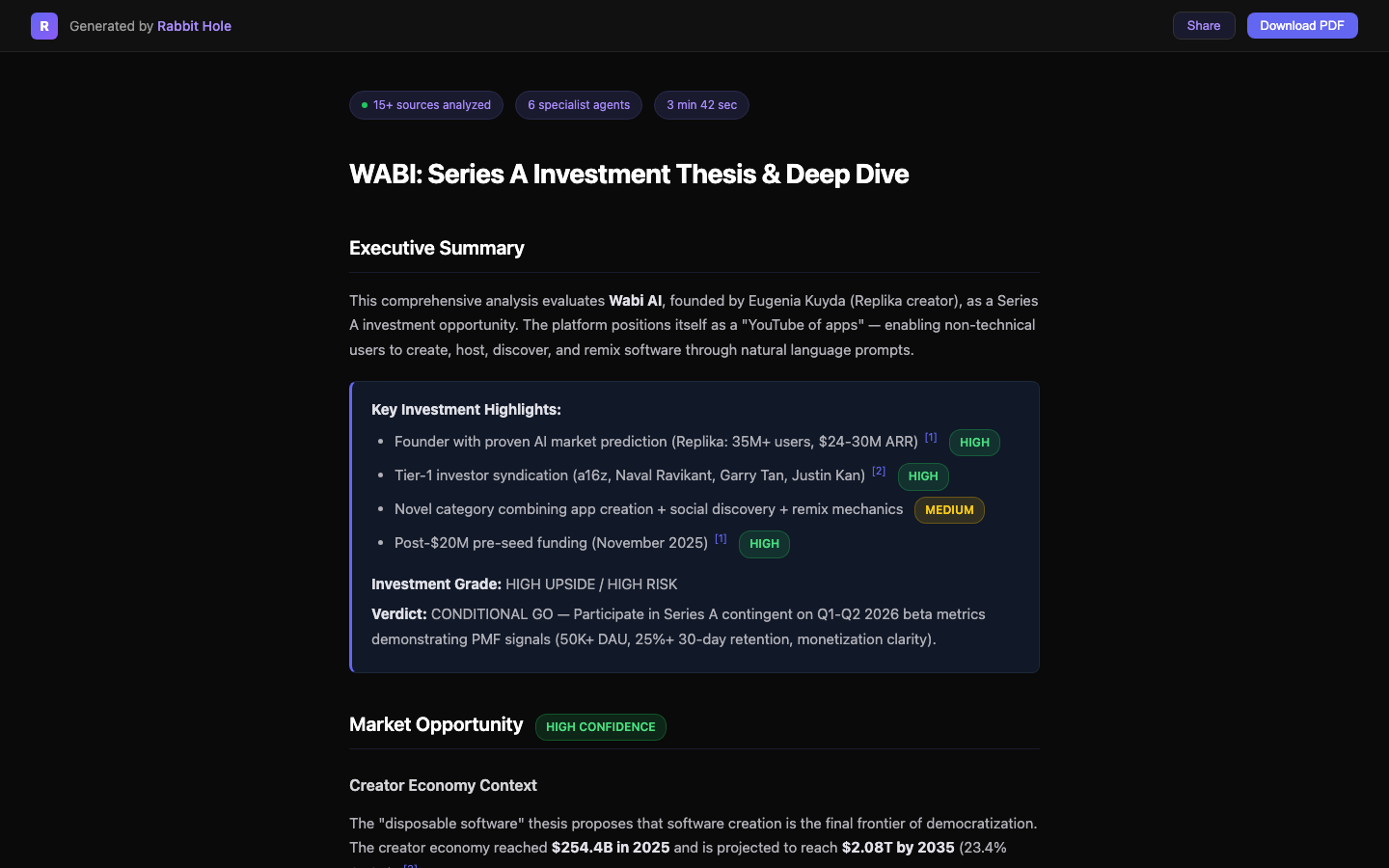

Here is what that looks like in practice inside Rabbit Hole. This is a real report artifact, not a mockup:

The important detail is not the formatting. It is the visibility of confidence. Market research gets dangerous when every sentence looks equally trustworthy. A good artifact makes it obvious which claims are supported by multiple sources and which ones are still directional.
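One way to make confidence visible is to derive the label from how many distinct source types back a claim. The sketch below is a hypothetical structure, not Rabbit Hole's actual schema: the `Finding` and `Report` classes, the thresholds (three or more source types is "high", two is "medium", otherwise "directional"), and the sample findings are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    claim: str
    source_types: set[str] = field(default_factory=set)

    @property
    def confidence(self) -> str:
        # Assumed thresholds: 3+ distinct source types is "high",
        # 2 is "medium", anything less is only "directional".
        n = len(self.source_types)
        if n >= 3:
            return "high"
        if n == 2:
            return "medium"
        return "directional"


@dataclass
class Report:
    executive_takeaway: str
    findings: list[Finding]
    open_questions: list[str]

    def render(self) -> str:
        """Render the report as plain text with a confidence label per claim."""
        lines = [f"Executive takeaway: {self.executive_takeaway}", "", "Findings:"]
        for finding in self.findings:
            sources = ", ".join(sorted(finding.source_types)) or "none"
            lines.append(f"- [{finding.confidence}] {finding.claim} (sources: {sources})")
        lines += ["", "Open questions:"]
        lines += [f"- {question}" for question in self.open_questions]
        return "\n".join(lines)


# Made-up findings, for illustration only.
report = Report(
    executive_takeaway="Pain is real, willingness to switch is unproven.",
    findings=[
        Finding("Buyers complain about setup time", {"review", "reddit", "forum"}),
        Finding("Incumbent pricing is hard to compare", {"pricing_page", "review"}),
        Finding("Enterprise demand is growing", {"job_post"}),
    ],
    open_questions=["Would buyers switch for faster setup alone?"],
)
print(report.render())
```

Counting source types rather than raw mentions is a deliberate choice in this sketch: ten reviews repeating the same complaint are still one narrative surface.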

Where an AI market research tool is most useful

The strongest use cases are the moments when a team is about to commit resources:

AI market research tool for new category entry

If you are deciding whether to enter a category, the job is not to estimate TAM with theater-grade precision. It is to understand whether the category has urgent pain, reachable buyers, and a gap that incumbents are leaving open.

AI market research tool for competitor positioning

If your team keeps saying "we need better positioning," the real need is usually better evidence. You need to know which claims competitors repeat, which proof points they lean on, and where buyers remain unsatisfied. That is how you find language that is both true and differentiated. For a more manual version of that process, read Competitive Intelligence Without the Spyware Budget.

AI market research tool for high-stakes recommendations

Consultants, operators, and founders need reports they can forward. If the work is going into a deck, partner memo, launch brief, or board discussion, the output has to be checkable. That is the line between "interesting" research and useful research. If you want a stricter verification layer, read How to Verify AI Research Output. If you are evaluating a company instead of a market, AI Due Diligence is the adjacent workflow.

FAQ: choosing an AI market research tool

What makes an AI market research tool credible?

A credible AI market research tool shows where claims came from, how many independent sources support them, and where the evidence is still thin. If every sentence looks equally certain, the tool is flattening the research problem instead of helping you reason through it.

Can an AI market research tool replace customer interviews?

No. It can narrow the field, surface repeated pain themes, and show where buyers are already signaling frustration or urgency. But it cannot fully replace direct conversations when the decision involves roadmap, pricing, or enterprise rollout risk.

What is the difference between market research AI and competitor analysis AI?

Market research AI asks whether a category is attractive and where demand is moving. Competitor analysis AI asks how specific players are packaging the solution and where they are vulnerable. In practice, teams need both views together. That is why AI Competitor Analysis and AI Due Diligence sit next to this workflow.

Sources

- Corporate Visions: 2026 B2B buyer behavior roundup — summary of recent G2 and 6sense buyer-behavior findings used in the shortlist and trust section above.

- G2: 2025 Buyer Behavior Report — evidence on how B2B software buyers research vendors.

- 6sense: The B2B Buyer Experience Report — evidence on shortlist formation and hidden buying cycles.

Why Rabbit Hole fits this workflow

Rabbit Hole is useful as an AI market research tool because it treats market research as a multi-source evidence problem, not a single-answer problem. It searches different source types in parallel, keeps contradictions visible, and returns a structured report with confidence ratings and reusable artifacts.

That matters when the decision is expensive. You do not need a prettier summary. You need a market view you can defend when someone asks, "What is this actually based on?"

If that is the standard, try Rabbit Hole. It is built for teams that need market research they can challenge, share, and act on.
