AI Research Assistant for Consultants: From Client Question to Defensible Brief

An AI research assistant helps consultants turn scattered sources into a defensible client brief with citations, contradictions, and confidence labels.

An AI research assistant is useful to consultants for one reason: client work punishes confident hand-waving. It is not enough to sound informed in a steering committee or slide review. You need to show what the claim is, where it came from, what contradicts it, and how much confidence you should have before a client commits budget, headcount, or strategy around it. Most consulting research still happens in a fragile workflow of tabs, copied notes, screenshots, and half-remembered sources. The bottleneck is not access to information. It is turning scattered evidence into a brief that survives scrutiny.

That tension is showing up everywhere in the current AI conversation too. Hacker News today was full of debate about tooling trust, privacy fallout, and whether faster AI workflows are making people more effective or just more certain. Consulting has the same problem. A smooth answer is not a strategy deliverable. A defensible brief is.

Consultants usually do not need more words. They need an AI research assistant that makes four things visible before a recommendation goes into a deck:

- The evidence trail: where each claim came from.

- The contradictions: what cuts against the main conclusion.

- The confidence level: which findings are solid versus directional.

- The next checks: what still needs manual validation before a client sees it.

That is the difference between a fluent answer and a defensible brief.
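One way to make those four elements concrete is to treat each finding as a small record rather than a paragraph. The sketch below is illustrative only: the names `Finding`, `Source`, `Confidence`, and `is_deck_ready` are hypothetical and do not describe any particular tool's data model.

```python
from dataclasses import dataclass, field
from enum import Enum


class Confidence(Enum):
    SOLID = "solid"
    DIRECTIONAL = "directional"


@dataclass
class Source:
    title: str
    source_type: str  # e.g. "regulatory filing", "forum post"


@dataclass
class Finding:
    claim: str
    confidence: Confidence
    # Evidence trail: where the claim came from.
    evidence: list[Source] = field(default_factory=list)
    # Contradictions: what cuts against the claim.
    contradictions: list[Source] = field(default_factory=list)
    # Next checks: manual validation still outstanding.
    next_checks: list[str] = field(default_factory=list)

    def is_deck_ready(self) -> bool:
        """Hypothetical rule: cited, solid, and nothing left to verify."""
        return (
            bool(self.evidence)
            and self.confidence is Confidence.SOLID
            and not self.next_checks
        )
```

A finding with a citation and solid confidence passes this check until someone adds an open item to `next_checks`, which is the point: the record cannot look finished while validation is still pending.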

Why consultants need an AI research assistant, not just a faster chatbot

Consulting research is cross-functional by default. A client asks a question that sounds simple (Should we enter this market? Why are win rates dropping? How exposed are we to this competitor?) and the answer lives across earnings calls, review sites, analyst reports, hiring patterns, Reddit complaints, regulatory filings, product docs, and executive interviews.

That makes the usual one-window AI workflow weak in three ways:

- It hides provenance. The answer sounds polished, but nobody knows which claims came from a primary filing versus a forum post.

- It collapses contradictions. Consulting insight often comes from the mismatch between what companies say and what customers report.

- It is hard to reuse. Client work needs a memo, appendix, source list, and follow-up questions, not just a nice paragraph in a chat box.

An AI research assistant should behave more like an analyst team than a single narrator. It should pull from multiple source types in parallel, keep contradictory evidence visible, and return an artifact a consultant can work from.

What an AI research assistant for consultants should actually produce

The right output is not "a summary of the internet." It is a decision-oriented brief.

A strong consulting research artifact should answer:

- What is most likely true?

- What evidence supports that view?

- What evidence cuts against it?

- Which parts are high confidence and which are still directional?

- What should the team validate manually before putting this in front of the client?

That is what separates an AI research assistant from a generic writing tool. Consultants do not need more fluent text. They need structured evidence they can challenge.

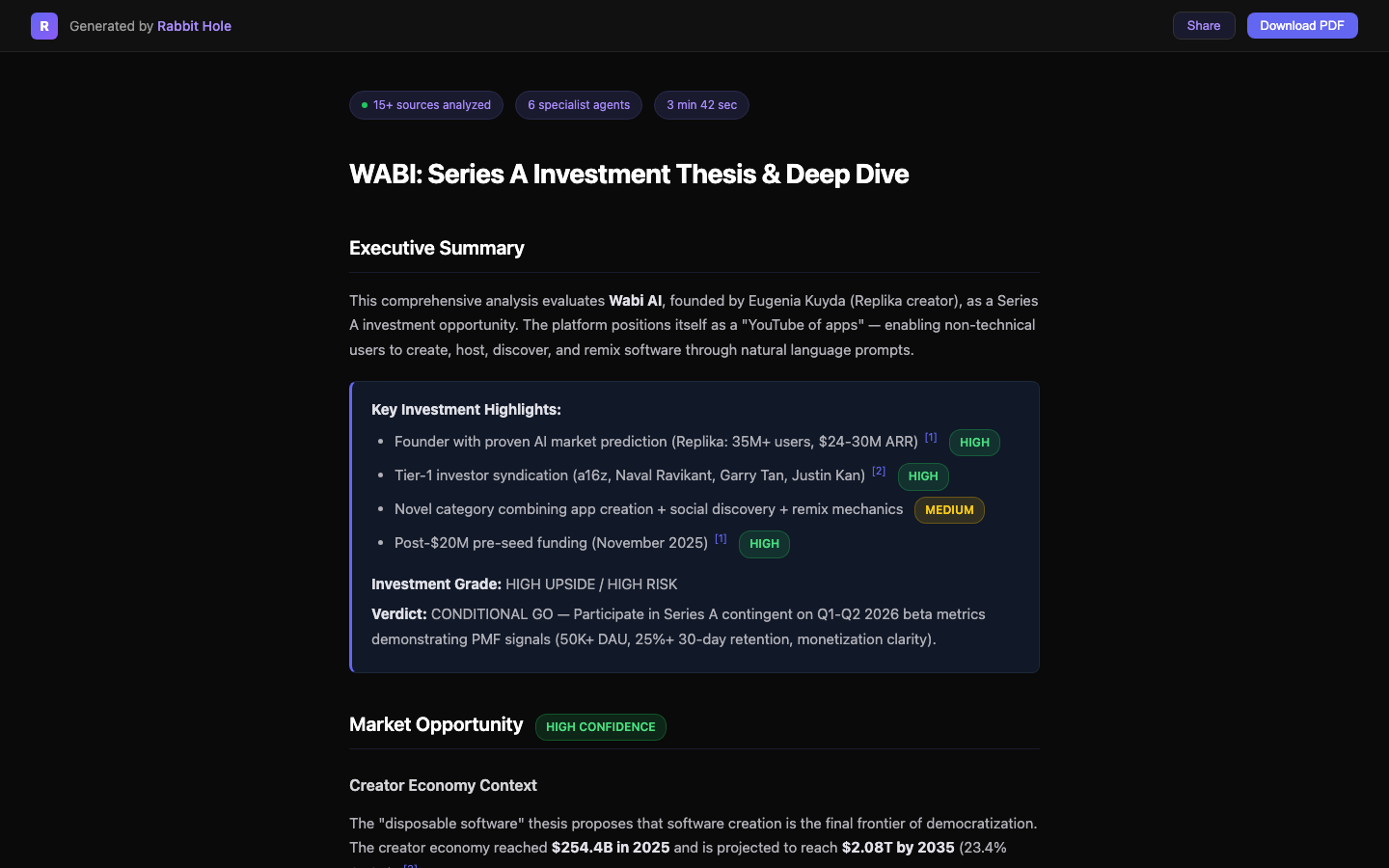

Here is what that artifact looks like inside Rabbit Hole — a report with cited findings, confidence badges, and a visible final verdict instead of an untraceable wall of prose:

The key design choice is not the layout. It is that the uncertainty stays visible. When a client asks, "How sure are we about this?" the brief should already contain the answer.

The best AI research assistant workflow starts with the client decision

The fastest way to get bad output is to start broad.

Bad prompt: Research the warehouse automation market.

Better prompt: Build a consultant-ready brief on whether a mid-market ERP vendor should expand into warehouse automation in 2026. Compare market demand, incumbent positioning, customer complaints, implementation friction, regulatory constraints, and likely buyer objections. Separate strong evidence from weak signals.

That instruction forces the AI research assistant to work backward from the decision, not forward from the topic.
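If a team writes prompts like the better one above repeatedly, the pattern can be captured in a small helper. This is a minimal sketch: `decision_prompt` and its parameters are hypothetical names, and the wording simply mirrors the example prompt.

```python
def decision_prompt(decision, criteria):
    """Build a research prompt that starts from the client decision."""
    if not criteria:
        # A prompt with no criteria collapses back into "research the topic".
        raise ValueError("List at least one decision criterion")
    return (
        f"Build a consultant-ready brief on whether {decision}. "
        f"Compare {', '.join(criteria)}. "
        "Separate strong evidence from weak signals."
    )


prompt = decision_prompt(
    "a mid-market ERP vendor should expand into warehouse automation in 2026",
    ["market demand", "incumbent positioning", "customer complaints"],
)
```

Refusing to build a prompt without criteria is a deliberate choice here: it makes the broad, topic-first request impossible to send by accident.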

A practical consulting workflow looks like this:

- Frame the question. Turn a vague client ask into explicit decision criteria so the output stays decision-oriented instead of generic.

- Search in parallel. Pull filings, analyst notes, customer sentiment, docs, and public discussion at the same time to reduce tab chaos.

- Surface contradictions. Flag where company claims and market evidence diverge, because that tension usually contains the real insight.

- Structure the brief. Return findings, sources, confidence, and open questions in a format a consultant can reuse in slides or memos.

That process matters because consulting speed is deceptive. The problem is rarely that a team cannot find enough information. It is that they cannot integrate it fast enough to shape a recommendation before the meeting starts.
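The search, contradiction, and structuring steps can be sketched in a few lines. Everything here is an assumption for illustration: the three `search_*` functions are hypothetical stubs returning canned results in place of real retrieval, and `build_brief` is not any product's API.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical stand-ins for real retrieval.
# Each returns a list of (source_type, stance, note) tuples.
def search_filings(question):
    return [("filing", "supports", "Vendor reports rising automation revenue")]


def search_reviews(question):
    return [("review site", "contradicts", "Buyers cite long implementation times")]


def search_forums(question):
    return [("forum", "contradicts", "Users complain about integration cost")]


SEARCHERS = [search_filings, search_reviews, search_forums]


def build_brief(question):
    # Search in parallel: one worker per source type.
    with ThreadPoolExecutor(max_workers=len(SEARCHERS)) as pool:
        batches = list(pool.map(lambda search: search(question), SEARCHERS))
    hits = [hit for batch in batches for hit in batch]

    # Surface contradictions: keep them in their own list
    # instead of blending them into one narrative.
    supporting = [h for h in hits if h[1] == "supports"]
    contradicting = [h for h in hits if h[1] == "contradicts"]

    # Structure the brief: findings, contradictions, open questions.
    return {
        "question": question,
        "supporting": supporting,
        "contradicting": contradicting,
        "open_questions": [f"Validate manually: {note}" for _, _, note in contradicting],
    }


brief = build_brief("Should the vendor expand into warehouse automation?")
```

The design choice worth copying is that contradicting evidence gets its own key in the output, so it cannot be silently dropped when the brief is turned into slides.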

Where an ai research assistant helps consultants most

AI research assistant for market-entry work

When a client is considering a new category, the research job is not to generate a giant market landscape nobody will read. It is to answer whether demand is real, whether buyer pain is urgent, and whether the market is crowded in the wrong places. For a deeper breakdown of that workflow, see AI Market Research Tool, which shows how to compare buyer pain, pricing patterns, review-site evidence, and shortlist signals before a recommendation turns into a client bet.

AI research assistant for competitor strategy

A lot of competitive work fails because teams collect homepage claims instead of buyer evidence. A useful AI research assistant compares how competitors position themselves, how customers talk about them, where pricing differs, and where dissatisfaction keeps leaking out. That is the material strategy teams actually need. Rabbit Hole’s AI Competitor Analysis piece goes deeper on that use case.

AI research assistant for diligence-heavy projects

Consultants working on growth strategy, vendor selection, M&A screening, or private-equity support need a research trail that can survive a partner review. That means keeping citations close to claims and marking the parts that still need firsthand validation. If the assignment leans more toward transaction work, AI Due Diligence is the adjacent Rabbit Hole workflow.

Where most AI research assistant outputs still break down

Even good tools can mislead if teams use them lazily. Three failure modes recur:

- Compression mistaken for understanding. A shorter report can feel clearer while quietly removing the exact nuance the client needs.

- Flattened source quality. A quote from an earnings transcript and a complaint from one forum user should not carry the same weight.

- Vanishing uncertainty. The more polished the language, the easier it is for weak evidence to masquerade as strong evidence.

That is why an AI research assistant should not just retrieve. It should expose its reasoning structure in practical terms: source type, confidence level, tension points, and the questions still open.
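The point about source weight can be made mechanical. The sketch below assigns each source type a weight and derives a confidence label from the net evidence. The weights, thresholds, and label names are hypothetical values chosen for illustration, not calibrated figures or any tool's actual scoring.

```python
# Hypothetical weights: illustrative values, not calibrated against anything.
SOURCE_WEIGHTS = {
    "earnings transcript": 1.0,
    "regulatory filing": 1.0,
    "analyst report": 0.7,
    "review site": 0.5,
    "forum post": 0.2,
}

# Unknown source types get a low default weight rather than being trusted.
DEFAULT_WEIGHT = 0.1


def confidence_label(supporting, contradicting):
    """Label a claim given lists of source-type strings for and against it."""
    support = sum(SOURCE_WEIGHTS.get(s, DEFAULT_WEIGHT) for s in supporting)
    against = sum(SOURCE_WEIGHTS.get(s, DEFAULT_WEIGHT) for s in contradicting)
    net = support - against
    if net >= 1.5:
        return "high"
    if net >= 0.5:
        return "directional"
    return "unverified"
```

Under these assumed weights, a claim backed only by a single forum post stays "unverified" no matter how polished the sentence describing it is, while two primary sources outweigh one forum complaint.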

AI research assistant FAQ for consultants

What should an AI research assistant for consultants include?

An AI research assistant for consultants should include cited claims, source-type visibility, contradiction tracking, confidence labels, and open questions for manual validation. If the output cannot survive a partner review or client challenge, it is not ready.

Is an AI research assistant enough for consulting deliverables on its own?

No. An AI research assistant should accelerate evidence gathering and synthesis, but consultants still need to validate critical claims, pressure-test assumptions, and tailor the recommendation to the client context. The tool should strengthen judgment, not replace it.

What consulting projects benefit most from an AI research assistant?

The best fit is work where the answer spans multiple source types: market-entry research, competitor strategy, vendor evaluation, and diligence-heavy briefs. Those are exactly the cases where a single-chatbot workflow tends to hide source quality and contradictions.

Why Rabbit Hole fits consulting work

Rabbit Hole works as an AI research assistant for consultants because it treats research as a parallel evidence problem. Multiple agents can search different source types at the same time, then return a report with cited findings, contradictions, and confidence scores. That makes the output usable in the actual consulting workflow: partner memo, client brief, strategy deck, or working session.

The value is not that it saves a few minutes on note-taking. The value is that it helps a team move from "we found some things" to "here is the argument, here is the evidence, and here is what we still need to verify."

If you are still deciding between the mainstream options, compare the trade-offs directly in Best AI Research Assistants for 2026, especially the workflow-risk chooser for client memos versus quick scans, or use the adjacent workflow in How to Verify AI Research.

Sources and adjacent reading

- AI Market Research Tool

- AI Competitor Analysis

- AI Due Diligence

- How to Verify AI Research

- Best AI Research Assistants for 2026

The real bar for consulting research is a brief your team can inspect. If you want an AI research assistant that produces those artifacts instead of answers you have to trust on faith, try Rabbit Hole.
